Search Query Mining for B2B SaaS: Turning Query Data Into Product and Messaging Signals

Learn how B2B SaaS teams can turn PPC query data into sharper messaging, better ICP insight, and stronger product-market alignment.

Most SaaS teams open the search terms report, add a few negatives, check for obvious irrelevant traffic, and close it again. The exercise takes twenty minutes and produces a slightly cleaner account. That is about ten percent of the value available in that report.

Search query data is one of the few places in B2B SaaS marketing where buyers tell you, in their own language and without a survey mediating the result, exactly how they think about their problem: the words they use, the categories they search within, the competitors they compare you against, the features they cannot find, and the confusion they carry into the buying process. All of that is sitting in the search terms report, and most teams are leaving it on the table.

This article is about treating query mining as a SaaS marketing intelligence workflow rather than a housekeeping task.

What Query Mining Actually Is

Search query mining, in the sense that matters for B2B SaaS, is the systematic extraction of buyer language and intent signals from the actual search terms that triggered paid ad impressions. It is distinct from keyword research, which deals with the planned terms you bid on. Query mining deals with what buyers actually typed.

The difference is significant. Keyword research uses tools to estimate what buyers probably search for. Query mining reads what they demonstrably did search for, in the language they chose, at a moment when purchase intent was present. That makes it qualitatively different as a source of market intelligence.

For SaaS teams, the signals available in query data fall into five categories: pain language, use-case framing, objection patterns, competitive landscape awareness, and product confusion. Each category has distinct applications for paid media, product marketing, and sometimes product development itself.

The Five Signal Categories

Pain language

Buyers describe their problems in search queries. A query like "reporting too slow for weekly board meetings" does not map to a keyword anyone planned. It is a buyer describing a specific, emotionally charged pain at the moment they are motivated to find a solution.

These long-tail pain queries rarely have meaningful individual search volume, which is why keyword research tools miss them. But they cluster into patterns. If fifty queries over three months describe variations of "reporting taking too long," you have direct evidence that speed of reporting is a pain point resonating with your ICP. That evidence is more reliable than a positioning hypothesis derived from internal discussion, because it reflects what buyers care about enough to search for. Allocating Budget Across Search, Social and Display for B2B SaaS, also on this blog, takes this further.

Pain language from query data is a direct input for headline copy, ad copy, and landing page messaging. The best SaaS landing pages are written in buyer language, not product language. Query mining is one of the most reliable sources of buyer language available to a paid search team.

Use-case framing

Buyers search for solutions within a specific use case context: "project management for remote engineering teams," "invoice automation for freelance agencies," "CRM for early-stage SaaS." These queries reveal how different ICP segments frame their need, which is often different from how the product team frames the product's value.

Use-case queries are particularly valuable for SaaS teams deciding whether to specialise landing pages by vertical or segment. If query data shows significant volume around three or four distinct use-case framings, each of those framings probably warrants its own page rather than a single generic campaign destination.
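One way to check whether distinct use-case framings carry enough volume is to split "X for Y" queries into a category and a segment, then count the segments. The sketch below is a minimal illustration, not a definitive method: the function names and sample queries are assumptions (the first three queries reuse the examples above), and the "for" pattern will miss framings phrased any other way.

```python
import re
from collections import Counter
from typing import List, Optional, Tuple

def split_use_case(query: str) -> Optional[Tuple[str, str]]:
    """Split a '<category> for <segment>' query; return None if it does not fit."""
    match = re.match(r"^(.+?)\s+for\s+(.+)$", query.lower().strip())
    return (match.group(1), match.group(2)) if match else None

def segment_counts(queries: List[str]) -> Counter:
    """Count how often each segment appears across use-case queries."""
    counts: Counter = Counter()
    for query in queries:
        parts = split_use_case(query)
        if parts:
            counts[parts[1]] += 1
    return counts

# Hypothetical search terms, for illustration only.
use_case_sample = [
    "project management for remote engineering teams",
    "invoice automation for freelance agencies",
    "CRM for early-stage SaaS",
    "time tracking for freelance agencies",
    "crm pricing",  # no "for" framing, so it is ignored
]
use_case_segments = segment_counts(use_case_sample)
```

Segments that recur across many queries are the candidates for a dedicated page; a segment that shows up once is not yet a pattern.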


Objection patterns

Queries containing modifiers like "without," "no contract," "no credit card," "simple," "easy to use," or "doesn't require IT" are objection signals. The buyer is anticipating a barrier and searching for reassurance that it does not apply. These queries tell you which objections are present in the market at the moment of intent, before the buyer has seen your product or your landing page.

For SaaS teams, objection queries are a direct input for risk-reduction copy. If a cluster of queries around "no implementation fee" appears repeatedly, that concern should be addressed explicitly on the relevant landing page, not left unacknowledged. The page does not need to wait for a CRO test to discover that the objection matters; the query data has already told you it matters.
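A quick way to surface objection clusters is to count how often each modifier appears across exported search terms. The following is a rough sketch under stated assumptions: the modifier list is taken from the examples above and should be extended for your own market, and the function names and sample queries are hypothetical.

```python
import re
from collections import Counter
from typing import List

# Modifier phrases drawn from the examples in the text; extend for your market.
OBJECTION_MODIFIERS = [
    "without", "no contract", "no credit card", "simple",
    "easy to use", "doesn't require it", "no implementation fee",
]

def find_objections(query: str) -> List[str]:
    """Return the objection modifiers that appear as whole phrases in a query."""
    text = query.lower().replace("\u2019", "'")  # normalise curly apostrophes
    return [
        modifier for modifier in OBJECTION_MODIFIERS
        if re.search(rf"(?<!\w){re.escape(modifier)}(?!\w)", text)
    ]

def objection_counts(queries: List[str]) -> Counter:
    """Count modifier occurrences across a list of search terms."""
    counts: Counter = Counter()
    for query in queries:
        counts.update(find_objections(query))
    return counts

# Hypothetical search terms, for illustration only.
objection_sample = [
    "crm no contract monthly billing",
    "invoice software without setup fee",
    "reporting tool easy to use no credit card",
    "project tracker doesn't require IT",
]
objection_summary = objection_counts(objection_sample)
```

Matching whole phrases avoids false hits such as "simple" inside "simpleton". Modifiers with the highest counts are the objections most worth addressing on the page.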

Competitive landscape awareness

Queries containing competitor names, "[competitor] vs [category]," "[competitor] alternative," or "[competitor] pricing" reveal which competitors your ICP is aware of and actively comparing. This is competitive intelligence collected at the point of purchase intent, not through a survey or an analyst report.

For B2B SaaS marketing strategy, this data answers a question that is otherwise difficult to answer: which competitors are in the active consideration set of the buyers who are actually looking for your product right now? That is more commercially relevant than a brand-awareness survey asking which vendors respondents have heard of.

Competitive query patterns also identify bidding opportunities that the keyword research process may not surface. If a cluster of "[competitor] alternative" queries is triggering your broad match campaigns, that is evidence for building a dedicated competitor capture campaign around that term.
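Competitor queries can be separated by pattern before deciding whether a dedicated capture campaign is justified. This is an illustrative sketch only: the competitor names are invented placeholders, and the pattern labels ("alternative", "comparison", "pricing") are assumptions based on the query shapes described above.

```python
import re
from typing import Optional, Tuple

# Hypothetical competitor names; replace with the rivals in your own category.
COMPETITORS = ["acmecrm", "globodesk"]

def classify_competitive(query: str) -> Optional[Tuple[str, str]]:
    """Return (competitor, pattern) for a competitor query, else None.

    If a query names several competitors, the first one listed in
    COMPETITORS is reported.
    """
    text = query.lower()
    for name in COMPETITORS:
        if not re.search(rf"\b{re.escape(name)}\b", text):
            continue
        if re.search(r"\balternatives?\b", text):
            return name, "alternative"
        if re.search(r"\b(vs|versus)\b", text):
            return name, "comparison"
        if re.search(r"\b(pricing|price|cost)\b", text):
            return name, "pricing"
        return name, "other"
    return None
```

Counting the returned pairs over a month of search terms shows which rivals sit in the active consideration set and in what framing.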

Product confusion

Queries that do not match your product's actual capability, or that reveal misunderstanding about what the product does, are high-value signals that most teams delete as irrelevant. A query that repeatedly triggers your ads but reflects a use case your product does not serve reveals a positioning problem: your campaign messaging is attracting buyers who will not qualify.

The response to product confusion queries is not always to add them as negatives and move on. Sometimes the pattern reveals a category of buyer who would qualify if the product did serve their use case, which is a product roadmap signal. Sometimes it reveals that your ad copy is creating the confusion by being too broad. Sometimes it confirms that a negative keyword list is working correctly. The value is in reading the pattern rather than reflexively excluding the terms.

Separating Signal from Noise

Not every unusual query in the search terms report contains actionable intelligence. The first discipline in query mining is distinguishing noise from signal, and the most reliable separator is pattern frequency combined with conversion context.

A single unusual query is noise. Twenty queries in a month that share a common modifier, topic, or framing are a pattern worth examining. The threshold varies by account size: in higher-volume accounts, meaningful patterns emerge from smaller percentage clusters because absolute numbers are higher. In lower-volume accounts, a pattern of five or six semantically similar queries can still be worth acting on if the intent is sharp.

Conversion context separates commercially relevant patterns from curious but irrelevant ones. A cluster of queries that share a common framing and have above-average conversion rates is a signal that should change something, whether ad copy, landing page, or keyword targeting. A cluster that appears frequently but converts at zero across a meaningful sample is a negative keyword candidate, not a messaging input.
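The frequency and conversion tests can be written down as a simple triage rule. The sketch below is one possible encoding, not a standard: the `Cluster` fields, the labels, and the default thresholds (`min_queries`, `min_clicks`) are assumptions that should be tuned to account size, as noted above.

```python
from dataclasses import dataclass

@dataclass
class Cluster:
    label: str
    query_count: int   # distinct queries sharing the framing
    clicks: int
    conversions: int

def triage(cluster: Cluster, account_cvr: float,
           min_queries: int = 20, min_clicks: int = 100) -> str:
    """Label a cluster as noise, signal, negative_candidate, or watch.

    min_queries and min_clicks are illustrative thresholds, not rules.
    """
    if cluster.query_count < min_queries:
        return "noise"            # too few similar queries to call a pattern
    if cluster.clicks == 0:
        return "watch"            # a pattern, but no click data yet
    cvr = cluster.conversions / cluster.clicks
    if cvr > account_cvr:
        return "signal"           # frequent and converting above average
    if cluster.conversions == 0 and cluster.clicks >= min_clicks:
        return "negative_candidate"  # frequent, zero conversions, real sample
    return "watch"
```

For a lower-volume account, `min_queries` could be dropped to five or six, in line with the guidance above.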

The practical workflow: export the search terms report for a 60-90 day window, filter out queries with too little traffic to judge, and group semantically similar terms before applying any judgement. Most teams skip the grouping step and review terms one at a time rather than as clusters, which is why the patterns go unnoticed.
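The grouping step can be roughed out in code before a human reviews the result. The sketch below uses word overlap (Jaccard similarity) as a crude lexical stand-in for semantic similarity; it is an assumption, not the only way to group terms, and it will miss synonyms such as "sluggish dashboards" for "slow reporting". The stopword list, threshold, and sample queries are all illustrative.

```python
from typing import List, Set

STOPWORDS = {"for", "the", "a", "an", "to", "of", "in", "and", "is", "too"}

def tokens(query: str) -> Set[str]:
    """Lowercase a query and drop common filler words."""
    return {word for word in query.lower().split() if word not in STOPWORDS}

def jaccard(a: Set[str], b: Set[str]) -> float:
    """Share of words two queries have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def group_queries(queries: List[str], threshold: float = 0.4) -> List[List[str]]:
    """Greedy grouping: each query joins the first group whose seed it resembles."""
    groups: List[List[str]] = []
    for query in queries:
        for group in groups:
            if jaccard(tokens(query), tokens(group[0])) >= threshold:
                group.append(query)
                break
        else:
            groups.append([query])  # no similar group found, start a new one
    return groups

# Hypothetical search terms, for illustration only.
grouping_sample = [
    "reporting too slow for board meetings",
    "slow reporting board deck",
    "crm for early-stage saas",
    "crm early-stage startups",
]
query_groups = group_queries(grouping_sample)
```

With this sample the two reporting queries land in one group and the two CRM queries in another. Treat the output as a first draft of the clusters, to be merged and corrected by hand.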

The Query-Mining Workflow

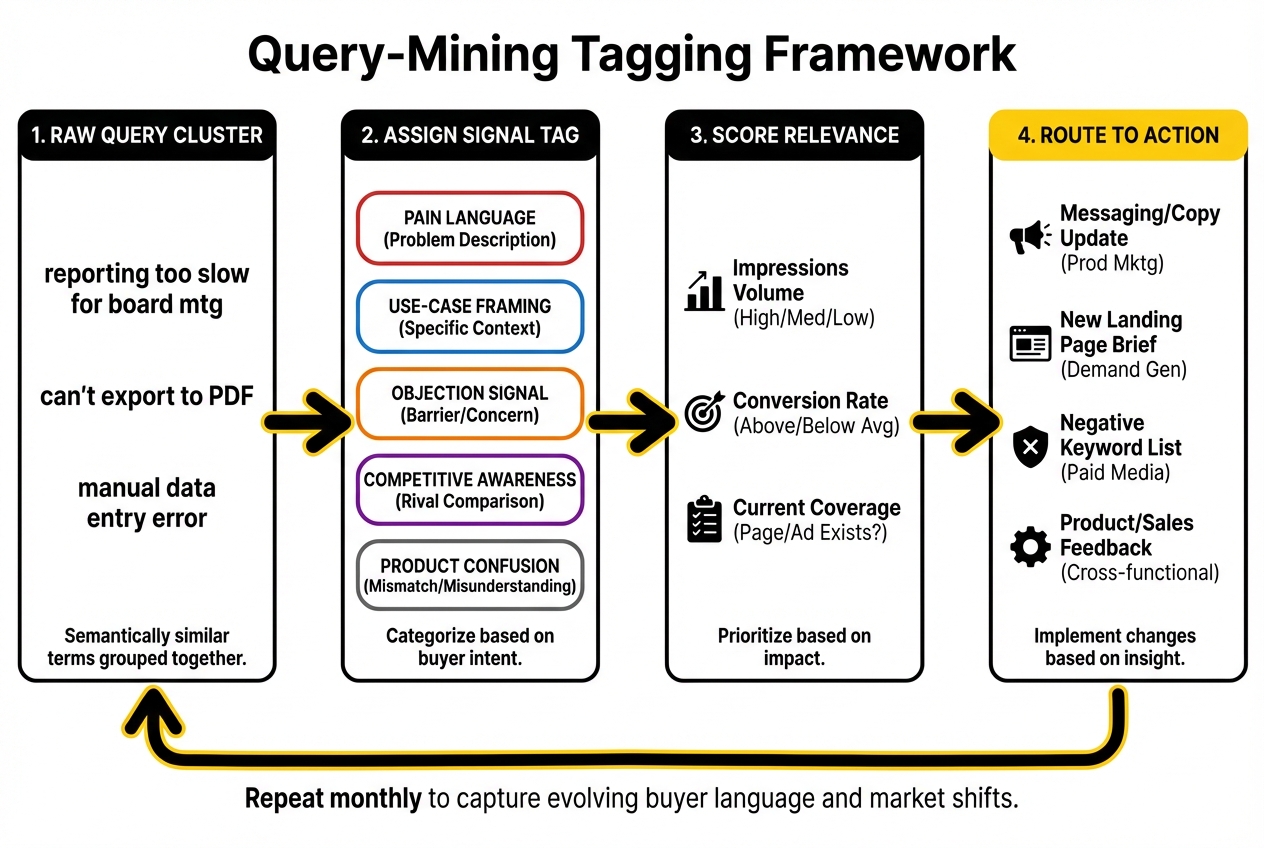

A repeatable query-mining workflow for SaaS PPC teams runs on a monthly cadence and takes roughly two to three hours if the export and tagging process is structured.

Step 1: Export and filter. Pull the search terms report for the prior 30-60 days. Filter for terms with at least one click. Separate branded from non-branded queries at this stage, as they serve different analytical purposes.

Step 2: Tag by signal category. Apply a tag to each query indicating which signal category it represents: pain language, use-case framing, objection, competitive, product confusion, or irrelevant. This step is the core of the workflow. Tagging forces a deliberate reading of each term rather than a scroll looking for obvious negatives. A simple spreadsheet with a tag column is sufficient; no specialist tooling is required.

Step 3: Cluster tagged queries. Group queries by semantic similarity within each tag category. Pain queries about onboarding belong in a different cluster from pain queries about reporting. Use-case queries for agencies belong in a different cluster from use-case queries for internal teams. The clusters reveal the pattern; the individual queries do not.

Step 4: Score by commercial relevance. For each cluster, note the total impressions, the conversion rate (where conversions are tracked), and whether the cluster is already covered by a specific campaign or landing page. High-impression, high-conversion clusters with no dedicated page are the highest-priority findings.

Step 5: Route findings to the right team. Not all query insights belong in the paid media workflow. Pain language patterns should be shared with product marketing for messaging input. Competitive query patterns should be shared with demand gen strategy for campaign decisions. Use-case clusters should be shared with whoever owns landing page development. Product confusion patterns may warrant a conversation with the product marketing team about ICP clarity.
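The steps above can be sketched as a small script that works on exported rows. This is a rough first pass meant to speed up manual tagging, not a replacement for reading the terms: the brand name, the trigger phrases in `TAG_RULES`, the row field names, and the tag labels are all assumptions. Product confusion and irrelevant tags are left to human judgement, and clustering (Step 3) is not shown.

```python
from collections import defaultdict
from typing import Dict, List

BRAND_TERMS = {"examplebrand"}  # hypothetical brand name

# Ordered (tag, trigger phrases) rules; the first matching rule wins.
TAG_RULES = [
    ("competitive", ["alternative", " vs ", "competitor"]),
    ("objection", ["without", "no contract", "no credit card", "easy to use"]),
    ("pain", ["too slow", "taking too long"]),
    ("use_case", [" for "]),
]

# Step 5: where each tag's findings go, following the routing in the text.
ROUTES = {
    "pain": "product marketing",
    "competitive": "demand gen strategy",
    "use_case": "landing page owner",
    "product_confusion": "product marketing",
}

def tag_query(term: str) -> str:
    """Step 2: suggest a signal tag for a non-branded search term."""
    padded = f" {term.lower()} "
    for tag, phrases in TAG_RULES:
        if any(phrase in padded for phrase in phrases):
            return tag
    return "untagged"  # needs manual review

def route(tag: str) -> str:
    """Step 5: return the team that should receive a tag's findings."""
    return ROUTES.get(tag, "paid media")

def summarise(rows: List[Dict]) -> Dict[str, Dict[str, float]]:
    """Steps 1 and 4: filter, split out branded terms, total metrics per tag."""
    totals = defaultdict(lambda: {"impressions": 0, "clicks": 0, "conversions": 0})
    for row in rows:
        if row["clicks"] < 1:
            continue  # Step 1: keep terms with at least one click
        term = row["term"].lower()
        is_branded = any(brand in term for brand in BRAND_TERMS)
        tag = "branded" if is_branded else tag_query(term)
        for metric in ("impressions", "clicks", "conversions"):
            totals[tag][metric] += row[metric]
    return {
        tag: {**t, "cvr": t["conversions"] / t["clicks"]}
        for tag, t in totals.items()
    }

# Hypothetical export rows, for illustration only.
export_rows = [
    {"term": "examplebrand login", "impressions": 500, "clicks": 120, "conversions": 10},
    {"term": "reporting too slow for weekly board meetings", "impressions": 40, "clicks": 6, "conversions": 1},
    {"term": "crm for early-stage saas", "impressions": 90, "clicks": 9, "conversions": 0},
    {"term": "invoice automation without contract", "impressions": 30, "clicks": 3, "conversions": 1},
    {"term": "free crm template", "impressions": 200, "clicks": 0, "conversions": 0},
]
tag_summary = summarise(export_rows)
```

Rule order matters: the pain rule runs before the use-case rule so that "reporting too slow for weekly board meetings" is read as pain language rather than as a "for" framing.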

How Query Insights Feed Messaging and Positioning

The highest-value application of query mining in B2B SaaS marketing is not ad optimisation. It is messaging. Specifically, it is the systematic replacement of internal product language with buyer language in ads, landing pages, and campaign positioning.

Most SaaS product messaging is developed internally: the product team writes descriptions, the marketing team refines them, and leadership signs off. The result is language that accurately describes the product but does not necessarily reflect how buyers frame their need. The gap between internal product language and buyer language is where conversion rate problems live.

Query data closes that gap empirically rather than hypothetically. When pain queries cluster around a specific framing that does not appear anywhere in current ad copy or landing pages, that is a testable hypothesis: change the headline to match the buyer framing and measure whether conversion quality improves.

This is a more reliable input for a messaging test than an A/B hypothesis generated internally, because it is grounded in observed buyer behaviour rather than speculation about what might resonate. April Dunford's positioning methodology makes the point that effective positioning starts with understanding how buyers frame their problem before they encounter your product. Query data is one of the few places you can observe that framing at scale, in commercial context.


Query Mining for Exclusions, Pages, and Content

Beyond messaging, query data has three direct operational applications.

Negative keyword development. Irrelevant and product confusion queries that consistently consume budget without converting are negative keyword candidates. The important discipline here is not to add negatives reflexively for any query that looks irrelevant in isolation. A query that looks like noise individually may be part of a pattern that warrants a dedicated page rather than exclusion. Check the cluster before adding the negative.

Landing page briefs. High-frequency use-case clusters that are currently landing on a generic page are briefs for dedicated landing pages. A cluster of queries framing the product as a solution for a specific industry, team size, or use case, landing on a homepage or generic page, is a clear indicator that a dedicated page would improve conversion quality. The query data provides the brief: the headline, the pain to address, and the objection to pre-empt.

Content and topic ideas. Informational queries that trigger campaigns but do not convert are not wasted data. They reveal what buyers want to learn before committing to evaluation, which is content the SaaS marketing team should be producing for organic and nurture purposes. A cluster of "how to" queries around a specific problem area that your product solves is both a content brief and a negative keyword candidate for paid campaigns.

Cross-Functional Applications

The commercial value of query mining scales up significantly when the output moves beyond the paid media team. The patterns extracted from search query data are relevant to product marketing, sales enablement, and in some cases product management.

For product marketing, pain language clusters inform messaging frameworks, positioning documents, and sales deck copy. The language buyers use when searching is the language that should appear in category-level positioning.

For sales enablement, objection query patterns reveal the concerns that prospects carry into the sales conversation before your sales team has said a word. If "does it integrate with Salesforce" appears repeatedly as a search query cluster, that integration concern is coming up in sales conversations too. Having a documented and rehearsed answer at the sales stage starts with knowing the question is common at the search stage.

For product management, product confusion query patterns occasionally surface genuine gaps between what the product does and what a commercially viable segment of buyers needs it to do. This is not a daily occurrence, but when a confusion cluster is large enough and commercially relevant enough, it warrants a conversation with the product team rather than just a negative keyword addition.

Budget allocation decisions for demand capture work best when query data is already informing which segments and use cases are worth scaling. Without that grounding, budget decisions are made on platform metrics alone rather than on observed buyer intent patterns.

Our SaaS PPC agency runs this workflow as a standard part of account management. If you want to see how query patterns in your account translate into messaging and campaign decisions, it is worth a conversation.

Frequently Asked Questions

What can SaaS teams learn from PPC search query reports?

Beyond irrelevant terms to exclude, search query reports contain pain language that reveals how buyers describe their problems, use-case framing that shows how different ICP segments contextualise their need, objection signals in modifier words like "without" or "no contract," competitive awareness patterns from queries naming rivals, and product confusion signals where buyer intent does not match product capability. Each category has direct applications for messaging, landing pages, exclusions, and cross-functional intelligence.

How do you separate useful query insight from search-term noise?

Pattern frequency is the primary separator. A single unusual query is noise; a cluster of semantically similar queries over 60-90 days is a signal. Conversion context distinguishes commercially relevant patterns from interesting but irrelevant ones: clusters that appear frequently and convert above average should change something in the campaign or page. Clusters that appear frequently and never convert are negative keyword candidates. Reading terms individually rather than as semantic clusters is why most teams miss the patterns.

Which query patterns should influence SaaS messaging?

Pain language clusters that use framing not present in current ad copy or landing pages are the highest-priority messaging inputs. If buyers consistently describe the problem your product solves in language that does not appear in your headlines, that is a testable hypothesis for a messaging change. Use-case framing clusters that suggest a specific segment is arriving with unaddressed context are landing page briefs. Objection clusters are inputs for risk-reduction copy.

Can search query mining improve product positioning?

Yes, when the output is shared cross-functionally. Pain language from query data reflects how buyers frame their problem before encountering the product, which is precisely the input April Dunford's positioning methodology calls for. Use-case clusters reveal which ICP segments are arriving with search intent, which informs ICP definition. Product confusion patterns occasionally surface genuine positioning gaps between the product's current framing and a commercially viable buyer need.

How often should B2B SaaS teams mine search query data?

Monthly is the right cadence for most B2B SaaS accounts. It is frequent enough to catch emerging patterns before they distort spend or create persistent messaging mismatches, and infrequent enough that each session produces meaningful pattern changes rather than marginal updates. Higher-spend accounts may benefit from a fortnightly review of their most expensive query clusters. Quarterly is too infrequent: three months of unreviewed query data can represent significant accumulated waste and missed messaging opportunities.

What should be tagged in a SaaS query-mining workflow?

Every query should receive a tag from five categories: pain language, use-case framing, objection signal, competitive awareness, or product confusion. A sixth tag for irrelevant or navigational queries handles terms that should be excluded. The tagging step is the core discipline that distinguishes query mining from search-term pruning. Without it, patterns across semantically similar terms remain invisible because they are never viewed as a group.

How do query insights improve landing pages and ads?

Query insights improve landing pages by identifying pain framing and use-case contexts that are currently unaddressed on the destination page, creating conversion friction for visitors whose intent does not match the page. They improve ads by supplying buyer language for headlines and descriptions that is more resonant than internally generated product language. In both cases, the mechanism is the same: replacing assumptions about what buyers care about with evidence from what buyers demonstrably searched for.

When should query mining lead to new exclusions versus new messaging tests?

New exclusions are appropriate when a query cluster consistently consumes budget without converting across a meaningful sample, and when the intent does not plausibly map to any use case the product serves. New messaging tests are appropriate when a query cluster represents intent the product could serve, but the current page or ad copy fails to acknowledge the buyer's framing. The distinction requires reading the cluster in context rather than adding terms to a negative list reflexively. Some of the most valuable query patterns initially look like irrelevant traffic and turn out to be under-served use cases.

Query data is one of the most underused assets in B2B SaaS marketing. If your team treats it as a monthly negative keyword task, there is almost certainly commercial intelligence sitting in those reports that is not making it into your messaging, your pages, or your product marketing conversations. That is the kind of audit we run with SaaS teams regularly.